Why AI Will Never Replace Physics-Based Engineers

The Irreducible Gap Between Digital Pattern-Matching and Physical Reality

INVENTOR’S MIND BLOG

Herbert Roberts, PE | Inventor’s Mind | inventorsmindblog.com

The Question Nobody in Silicon Valley Wants to Answer

There is a narrative gaining momentum in technology circles—one which holds that artificial intelligence will eventually replace all engineers. The claim is seductive in its simplicity, which is precisely why it is wrong. It confuses two fundamentally different activities: (a) writing instructions for machines that operate in constructed digital environments, and (b) designing, analyzing, and certifying hardware that must survive the unforgiving physics of the real world.

The distinction matters because it determines who is actually at risk. Software engineers operate entirely within digital space—their inputs are digital, their processes are digital, their outputs are digital, and their validation is digital. AI lives natively in that space. A coding agent can already write, test, deploy, and iterate code without ever leaving the environment it was born in.

The Stolen Title

I want to draw a distinction here, and to draw it carefully, because it is easy to make this argument badly, and making it badly obscures the real point.

The issue is not the people. The people who write software for a living include some of the most technically rigorous and genuinely brilliant minds working in any field today. Software is, at bottom, a collection of logic statements that encode solutions to a set of decisions, and the computational problems it solves are real problems. The systems these people build are complex systems. This post is not an attack on any of them as individuals or as a profession.

The issue is the word.

Software development does not carry a P.E. license. It does not require one. The consequences of a failed software deployment — as real and as serious as those consequences can be — are generally not measured in structural collapses, aircraft accidents, or the catastrophic failure of life-safety systems. The accountability structure is different. The legal framework is different. The professional standard is different.

When the title “engineer” detaches from the accountability structure that gives it meaning, the word stops doing the work it was designed to do. The public hears “engineer” and reasonably infers: licensed, accountable, bound by professional standards to the safety of the people who depend on this work. That inference is correct when the word is used correctly. It is incorrect, and the incorrectness has real consequences, when the word is used as a general descriptor for anyone who solves technical problems on a computer.

The Roman engineers who designed the aqueducts did not get to blame the software. They did not get to issue a patch. They did not get to pivot to a new architecture after the first version failed. They built it right the first time because the alternative was catastrophic and permanent and entirely their fault.

That standard (build it right, own the consequence, no patch available) is what the word “engineer” carries. When the word travels without the standard, the standard gets lost. And the standard is the part that protects people.

Physics-based engineers (mechanical, aerospace, civil, materials, chemical) work at the boundary between the digital and the physical. They model in the computer but validate against physical testing. They analyze on screen but inspect with their hands and eyes. They calculate in software but certify against regulatory frameworks built on decades of accumulated failure data. That boundary crossing, from digital to physical, is the moat that AI cannot cross.

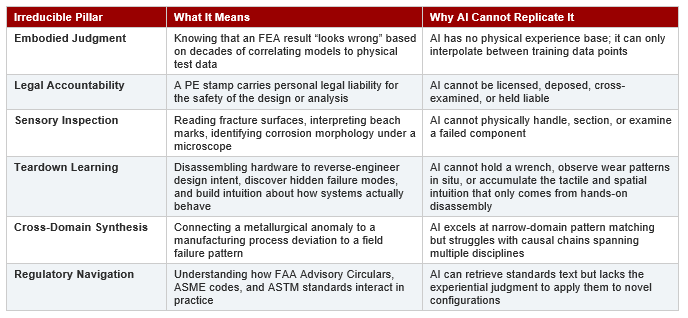

The Irreducible Gap: Six Pillars AI Cannot Replicate

To prove that physics-based engineering is not at risk of replacement, we need to identify the specific competencies that (a) define the profession, (b) depend on physical-world interaction, and (c) have no viable AI substitute on any foreseeable timeline. There are six such pillars.

Each of these pillars reinforces the others. Embodied judgment informs sensory inspection. Teardown learning feeds embodied judgment with thousands of physical observations that no simulation captures. Legal accountability demands regulatory navigation. Cross-domain synthesis depends on the accumulated experiential base that teardowns and field inspections produce. Remove any one pillar, and the engineering function degrades. Remove all six, and you no longer have engineering—you have computation.

Pillar 1: Embodied Judgment—The Knowledge AI Cannot Train On

Consider a finite element analysis of a turbine component under combined thermal and centrifugal loading. The model produces a stress distribution. A junior analyst reads the numbers. A senior engineer reads the story—and sometimes, the story the numbers are telling is wrong.

That senior engineer has spent twenty or thirty years correlating analytical predictions against physical test data. She has seen cases where the FEA showed acceptable stress but the part failed in service because the model’s boundary conditions didn’t capture a secondary load path. She has seen cases where the stress looked alarming but the material’s actual fatigue behavior, informed by metallurgical condition and surface finish, provided adequate life. This judgment—the ability to look at a computational result and say “something is wrong with this model”—is not pattern matching in the way AI performs pattern matching. It is the integration of thousands of physical observations into an intuitive framework that flags anomalies against lived experience.

AI can only interpolate between the points in its training data. It has never touched a fractured surface. It has never felt the vibration signature of an imbalanced rotor. It has never watched a test specimen neck down before final fracture and noticed that the reduction in area didn’t match what the material certification predicted. These sensory inputs, accumulated over decades, constitute a knowledge base that exists nowhere in digital form and therefore cannot be used to train any model.
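For readers who want the arithmetic behind that last observation: reduction in area is the standard tensile-test measure of ductility. The statement below is a minimal sketch that assumes a round specimen; the symbols $A_0$, $A_f$, $d_0$, and $d_f$ (original and final cross-sectional area and diameter at the neck) are introduced here for illustration.

```latex
\[
\mathrm{RA}
  = \frac{A_0 - A_f}{A_0} \times 100\%
  = \left( 1 - \frac{d_f^{2}}{d_0^{2}} \right) \times 100\%
\]
```

The formula only quantifies the discrepancy. Noticing that a measured value falls short of the certified one, and deciding what that says about the material, is the judgment described above.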

Pillar 2: Legal Accountability—The Liability AI Cannot Bear

When a Licensed Professional Engineer stamps a drawing, a report, or an analysis, that stamp carries the weight of personal legal liability. If the bridge collapses, if the pressure vessel ruptures, if the aircraft component fails—the PE who signed off is personally accountable. This is not theoretical. Engineers are deposed. Engineers testify. Engineers lose their licenses and face civil liability when their judgments prove wrong.

AI cannot be licensed. AI cannot be deposed. AI cannot be cross-examined by opposing counsel who wants to know why a particular material was selected, why a specific safety factor was applied, why an alternative design was rejected. The forensic engineering function—analyzing failures, determining root causes, translating complex technical findings into language a jury can understand—requires a human being with credentials, experience, and the ability to defend their conclusions under adversarial questioning.

Beyond the courtroom, regulatory compliance itself demands human accountability. The FAA appoints Designated Engineering Representatives, who are individual engineers, to approve technical data and make compliance findings on its behalf. The ASME Boiler and Pressure Vessel Code requires an Authorized Inspector, also a human, to witness pressure tests. Nuclear Regulatory Commission protocols require human sign-off at every stage. These are not bureaucratic formalities; they are the mechanisms by which society ensures that someone with skin in the game is vouching for the safety of the design.

Pillar 3: Sensory Inspection—The Physical World Demands Physical Presence

Failure analysis is fundamentally a hands-on discipline. The fractographer sections the failed component, mounts it in epoxy, polishes it through progressively finer grits, etches the microstructure, and examines it under optical and electron microscopy. The fracture surface itself tells a story—beach marks indicate fatigue, intergranular facets suggest environmental attack, dimpled rupture reveals ductile overload, cleavage facets indicate brittle fracture. Reading these surfaces requires years of training and thousands of examinations.

AI can analyze images of fracture surfaces, and it will get better at this over time. But the analysis begins long before the microscope: deciding where to section, how to preserve evidence, what artifacts might be introduced by the cutting process itself, which features to document at low magnification before moving to higher magnification. The physical handling of evidence, the preservation of chain-of-custody for litigation, the judgment about what to examine and in what sequence—these are irreducibly physical activities.

Equally important is field inspection. Assessing corrosion damage on a structure requires physical access, tactile evaluation of material loss, calibrated thickness measurements, and the experiential judgment to distinguish between cosmetic surface oxidation and structurally significant section loss. No remote sensing system or AI image analysis can replicate the information content of a trained inspector’s hands-on evaluation.

Pillar 4: Teardown Learning—The Knowledge That Only Comes from Taking Things Apart

There is a mode of engineering learning so fundamental that it is almost invisible: the teardown. Engineers learn by disassembling hardware—taking apart engines, gearboxes, actuators, structures, and assemblies to understand how design intent translates into manufactured reality. This is not casual curiosity. It is a systematic, hands-on investigation that builds a form of knowledge no textbook, simulation, or AI training set can replicate.

When an engineer tears down a gas turbine, she does not simply catalog parts. She observes wear patterns on bearing races that reveal actual load paths versus predicted ones. She sees fretting damage at interfaces that tells her the clamping loads were insufficient or the surface finish was wrong. She notices discoloration gradients on hot-section components that map the real thermal field—often quite different from the CFD prediction. She finds evidence of maintenance-induced damage, assembly errors, foreign object impacts, and degradation mechanisms that no design analysis ever contemplated. Every teardown is a confrontation between the theoretical and the actual, and the engineer who has performed hundreds of them carries an experiential database that fundamentally shapes how she approaches new designs.

This teardown knowledge is cumulative and cross-pollinating. The engineer who has disassembled a failed hydraulic actuator recognizes the same seal extrusion pattern when she sees it in a pneumatic system. The metallurgist who has sectioned dozens of fatigue-cracked turbine blades immediately recognizes the initiation site morphology when it appears in a completely different alloy system. The manufacturing engineer who has torn down competitor products understands design-for-assembly tradeoffs that no parametric model captures. Each teardown deposits a layer of physical intuition that compounds over a career.

AI cannot perform teardowns. It cannot hold a wrench. It cannot feel the resistance of a press-fit bearing being extracted and correlate that interference with the specification. It cannot smell the distinctive odor of overheated lubricant that indicates a thermal exceedance event. It cannot observe, in three dimensions and real time, how components nest together, how tolerances stack, how wear patterns reveal operational history. The teardown is irreducibly physical, irreducibly hands-on, and irreducibly human.

Beyond the individual component level, teardown learning extends to system-level understanding. Disassembling an entire assembly reveals packaging constraints, routing decisions, maintenance access compromises, and design-for-manufacturing adaptations that are invisible in CAD models and engineering drawings. The engineer learns why a bracket is shaped a particular way—not from the design intent document, but from seeing that it had to clear a wire harness that was routed differently than the drawing showed. This “as-built versus as-designed” knowledge is critical for failure analysis, redesign, and root cause investigation, and it exists only in the minds of engineers who have done the physical work.

The implications for AI displacement are decisive. An AI can analyze a photograph of a disassembled component. It can identify features in a CT scan. It can process dimensional inspection data. But it cannot perform the teardown itself, and more importantly, it cannot accumulate the embodied intuition that thousands of teardowns produce. The teardown is where textbook knowledge transforms into engineering judgment—and that transformation requires hands, eyes, and decades of physical engagement with real hardware.

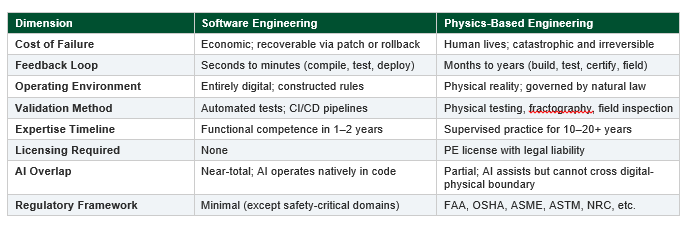

The Comparison: Why Software Is Vulnerable and Hardware Is Not

The contrast between software and physics-based engineering reveals exactly why one is at risk and the other is not. The comparison runs along four dimensions: speed of feedback, cost of failure, operating environment, and regulatory burden.

The pattern is clear. Every dimension that makes software engineering accessible—fast feedback loops, low failure costs, purely digital environment, minimal regulation—is simultaneously the dimension that makes it automatable. Conversely, every dimension that makes physics-based engineering demanding—slow feedback loops, catastrophic failure costs, physical-world interaction, heavy regulation—is simultaneously the dimension that protects it from automation.

AI as Tool, Not Replacement: How Physics-Based Engineers Will Actually Use AI

This argument should not be mistaken for technophobia. AI will transform how physics-based engineers work; it is already doing so. The key distinction is between AI as a tool that amplifies human capability and AI as a replacement that eliminates the human.

Consider the domains where AI is already proving valuable: (a) parametric design exploration, where AI can sweep through thousands of geometry variations and identify promising candidates for detailed analysis; (b) literature and standards search, where AI can rapidly surface relevant specifications, prior failure reports, and material property data; (c) automated mesh generation and FEA preprocessing, which reduces tedious setup time; and (d) TRIZ-based inventive problem solving, where AI can systematically map technical contradictions to inventive principles.

In every one of these applications, AI accelerates the engineering process without replacing the engineer. The parametric sweep still requires a human to define the design space, evaluate the results against manufacturing constraints, and select the final configuration. The literature search still requires a human to assess the relevance and applicability of the findings. The FEA still requires a human to verify boundary conditions, validate the mesh, and interpret the results against physical experience. The TRIZ analysis still requires a human to frame the contradiction correctly and evaluate whether the suggested principle applies to the specific physical system.
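The division of labor in a parametric sweep can be sketched in a few lines. This is an illustrative toy, not a design tool: it assumes an end-loaded cantilever with a solid rectangular section, and the function name `sweep_cantilever`, the load, length, density, and allowable stress are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Candidate:
    width_mm: float
    height_mm: float
    stress_mpa: float
    mass_kg: float


def sweep_cantilever(load_n, length_mm, density_kg_per_mm3,
                     allowable_mpa, widths_mm, heights_mm):
    """Evaluate every width/height pair; keep those under the allowable stress."""
    feasible = []
    for b, h in product(widths_mm, heights_mm):
        # Peak bending stress at the root of an end-loaded cantilever with a
        # rectangular section: sigma = 6 F L / (b h^2). N/mm^2 equals MPa.
        stress = 6.0 * load_n * length_mm / (b * h ** 2)
        mass = density_kg_per_mm3 * b * h * length_mm
        if stress <= allowable_mpa:
            feasible.append(Candidate(b, h, stress, mass))
    # Lightest feasible candidates first, so review starts at the top.
    return sorted(feasible, key=lambda c: c.mass_kg)


# Hypothetical design space and limits, supplied by the engineer.
shortlist = sweep_cantilever(
    load_n=500.0,
    length_mm=300.0,
    density_kg_per_mm3=7.85e-6,  # assumed steel-like density
    allowable_mpa=150.0,         # assumed allowable, not from any code
    widths_mm=[10.0, 20.0, 30.0],
    heights_mm=[10.0, 20.0, 30.0],
)
for c in shortlist:
    print(f"{c.width_mm:.0f} x {c.height_mm:.0f} mm: "
          f"{c.stress_mpa:.1f} MPa, {c.mass_kg:.3f} kg")
```

The sorted list is only a shortlist. Whether the lightest section can be manufactured, fits the available envelope, or survives loads the formula ignores is the part the sweep cannot answer.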

This is the pattern: AI handles the computation; the engineer provides the judgment. The computation is fast and getting faster. The judgment is slow and getting deeper. Both are necessary. Neither is sufficient alone.

The Expertise Compression Fallacy

Software culture has popularized the idea that expertise can be compressed into months. “World-class software engineer in six months” is a claim that circulates without irony on platforms like Substack. Read twelve books. Build projects. Ship code. The timeline is aggressive but arguably achievable—not because software people are smarter, but because the domain’s feedback loops are fast and the consequences of error are recoverable.

Physics-based engineering does not compress. You cannot become a competent metallurgist by reading twelve books any more than you can become a competent surgeon by watching twelve videos. The knowledge is embodied: it lives in the correlation between what the textbook predicts and what the test specimen actually does, accumulated over thousands of hours in laboratories, test cells, manufacturing floors, and failure investigations. This embodied knowledge is precisely what AI lacks and cannot acquire from text-based training data.

The implication is significant. If expertise cannot be compressed for humans, it certainly cannot be compressed for machines. The thirty-year expertise curve in physics-based engineering is not a bug—it is a feature. It reflects the genuine complexity of understanding how materials and structures behave under real-world conditions, which is the very complexity that insulates the profession from algorithmic displacement.

The Verdict: Protected by Physics, Secured by Accountability

The case is straightforward. Physics-based engineering is protected from AI replacement by six mutually reinforcing pillars: embodied judgment built over decades of physical-world correlation, legal accountability that demands a licensable human being, sensory inspection that requires physical presence and handling, teardown learning that builds irreplaceable intuition through hands-on disassembly, cross-domain synthesis that spans multiple scientific disciplines, and regulatory navigation that demands experiential interpretation of complex and interacting code frameworks.

AI will make physics-based engineers more productive. It will accelerate analysis, broaden design exploration, streamline documentation, and enhance quality assurance. These are genuine and valuable contributions. But the irreducible core of the profession—the judgment, the accountability, the physical-world interaction—remains firmly and permanently in human hands.

The engineers who should worry are those whose entire workflow exists in digital space, whose mistakes are reversible, and whose expertise can be acquired in months. The engineers who need not worry are those who work at the boundary between computation and physical reality, whose mistakes can be catastrophic, and whose expertise is measured in decades.

Physics doesn’t care about your algorithm. And that is exactly why physics-based engineers will still have jobs when the last line of code writes itself.
